How far should we tolerate AI lies? Measures to prevent RAG hallucination that IT systems should know

Telling complete lies in a plausible context. This unique AI behavior is valued as "creativity" in creative applications, but it poses a risk that cannot be overlooked when it comes to corporate knowledge search and business support, where accuracy is vital. For IT departments responsible for managing internal systems and digital transformation personnel responsible for company-wide reform, how to control this "AI lies" and reduce them to a level that can withstand actual operation is an urgent issue.

In this article, we will explain the root cause of hallucination in the "RAG (Retrieval-Augmented Generation)" system, which utilizes internal data, as well as how to technically prevent it and how to cover the parts that cannot be prevented by technology through operations.

"Plausible lies" that trouble digital transformation professionals

Expectations and reality of AI

As digital transformation accelerates, there is increasing pressure from management to dramatically improve operational efficiency by introducing AI like ChatGPT. In the initial demo screen, the AI generates answers in fluent Japanese, appearing at first glance to be the perfect solution.

However, when they actually try to read their company's manuals and regulations, they receive feedback like the following from the field:

"When I asked about the company's rules, I was told how to apply for benefits that don't exist."

"I'm asking about the specifications of product A, but the specs of a similar product B are mixed in."

This is hallucination. AI is not lying with malicious intent. It is simply connecting words that are probabilistically likely to come next, so if the context is natural, it will output content that is not factual.

Risk of hallucination

The seriousness of this problem lies not in the fact that the AI's answers are "clearly wrong," but rather that "at first glance they appear very logical and correct." If busy employees blindly accept the AI's answers and reply to emails or create documents without verifying them, the misinformation will spread quickly throughout the organization, and in the worst case scenario, to customers.

For digital transformation professionals, hallucination prevention is not simply a task of improving accuracy. It is risk management itself, managing the risk that false information will creep into business processes and distort decision-making.

The "RAG Swamp" that many companies face

RAG (Search Augmentation and Generation) is a system that searches for relevant information from an internal database and passes it to LLM as "reference material" to generate fact-based answers. In theory, if the answer is written in the reference data, the AI should be able to answer correctly.

However, the reality is not that simple. Many companies have conducted proof-of-concept (PoC), but the accuracy of the answers plateaus at around 60-70%, and in many cases the project is frozen, with the company deeming the system unusable for business purposes. This is what is known as the "RAG swamp."

Most of the time, hitting a wall in accuracy lies not in the "performance of the AI model" but in the "quality of the data being read" and "insufficient requirements definition." Easy data integration, such as "Just put all the files in SharePoint" or "Just upload a PDF and the AI will read it," fails in most cases. Unlike the structured data (databases and CSVs) that IT staff usually deal with, RAG deals with unstructured data (natural language). This data contains a lot of noise that confuses the AI, such as variations in notation, layout issues, and old document versions.

A typical pattern of failed RAG implementation is not recognizing this "data wall" and thinking that it can be solved simply by introducing the latest LLM model.

▼I want to know more about RAG

⇒ Retrieval Augmented Generation (RAG) | Glossary

Why does hallucination occur even in RAG?

Hallucination in a RAG environment is not a magical failure that occurs in a black box. If we break it down logically, we find that errors occur mainly in either the Retrieval or Generation process, or both.

Search failure: Not finding the right internal documents in the first place

The biggest bottleneck in RAG is not the generation AI, but the search part. This is a phenomenon also known as "Garbage In, Garbage Out: If garbage (= poor quality data) goes in, garbage (= hallucination) comes out."

- Noise: If the old regulations (2019 edition) and the new regulations (2025 edition) are mixed in the same folder, the search engine may mistakenly pick up the older one. The AI will not know that the information it receives is "old" and will respond as the correct answer.

- File name and structure issues: Meaningless file names like "Important Documents.pdf" or PDFs that contain only scanned images and no text information will not be found in the search net.

- Failure to split chunks: In many cases, sentences are split improperly, resulting in the core part of the question being cut off, and the AI receiving incomplete information.

These cases are not cases where the AI has lied, but rather cases where the AI has been given false cue cards (reference materials). To prevent this, it is necessary to review the company's internal document management rules rather than modifying the system.

Failure to produce: The information is there, but the LLM misreads the context or mixes in unnecessary information.

Even if you can find the right document, it doesn't mean that the LLM will be able to handle it correctly.

- Lost in the Middle: When the document to be referenced is too long or multiple conflicting pieces of information are provided, LLMs tend to overlook important information, especially information in the middle of the prompt.

- Regression to training data: If an internal term (e.g., Project X) has the same name as a general term (e.g., Company X), the AI may respond by prioritizing the "general knowledge" it has acquired through prior training, rather than the content of the internal document. This is the phenomenon of "mixing in unnecessary knowledge."

The problem isn't "AI is bad," but "the quality of data and search"

When discussing countermeasures against hallucination, the focus tends to be on "which LLM (GPT, Claude, Gemini, etc.) should be used?" However, in practice, the "quality of the data pipeline" is more important than differences in models. The fundamental principle of data processing mentioned above, "Garbage (poor quality data) in, garbage (hallucination) out," remains unchanged even in the age of AI. Rather than seeking the cause in inexplicable AI behavior, the first step to solving the problem is to identify the blockage in the data flow from an engineering perspective.

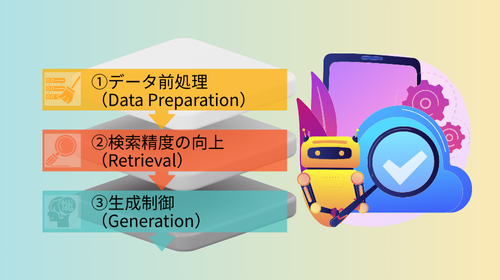

Technical Approach: Three Barriers to Improve Accuracy

To technically contain hallucination, a multi-layered defense approach that creates barriers in all three phases of data preprocessing, search, and generation is effective.

①Data Preparation

It is no exaggeration to say that 80% of the success of RAG depends on the "preparation" before the data is input.

- Appropriately dividing documents (chunking): LLM has a limit (context window) on the number of tokens (number of characters) that it can process at one time. Also, the longer the text, the lower its accuracy becomes. Therefore, it is necessary to divide documents into appropriate chunks (chunks). It is not enough to simply "cut into 500-character chunks," but it is also important to set "semantic chunking," which divides into meaningful chunks (paragraphs), and "overlap," which overlaps previous and following sentences to prevent loss of context.

- Metadata assignment: Relying solely on text search will not improve accuracy. Tags (metadata) such as "creation year," "department," and "document type (regulations, manuals, minutes)" are assigned to files. This allows for filtering during searches, such as "search among accounting department manuals for fiscal year 2025," and physically eliminates old files with the same name and unrelated files from other departments.

- Importance of OCR accuracy: Many Japanese companies face challenges with PDFs scanned from paper documents and complex tables created in Excel. Conventional OCR often destroys the table structure, turning the text into an incomprehensible mess. Having AI read this data can cause chaos. It's necessary to improve data quality and make it easier for AI to understand, such as by using AI-OCR that excels at table recognition, or by using multimodal LLM to convert to Markdown format and maintain the structure.

▼Saison Technology also offers preprocessing applications to improve the accuracy of the generated AI's responses.

⇒ Problem-solving solutions for AI project leaders

②Improvement of search accuracy (Retrieval)

Next, we tune the search engine to find the "correct answer" from the organized data.

- Hybrid search (keyword search x vector search): In the early days of RAG, "vector search" was the mainstream, which quantified the meaning of a sentence and then searched for it, but this had its weaknesses. It was weak in searches that required an exact match, such as "model number (e.g., A-123 and A-124)," and in searches for company-specific abbreviations. On the other hand, traditional "keyword search" would not produce hits unless the words matched (e.g., searching for "PC" would not produce hits for "personal computer"). To compensate for the weaknesses of both methods, "hybrid search," which performs keyword search and vector search in parallel and blends the results, has become the current de facto standard.

- Introducing re-ranking: The process of using AI to carefully re-score and sort the top 20 or so documents found in a search to determine whether they are truly relevant to the question is called "re-ranking." Search engines prioritize speed, so accuracy can be low. However, by incorporating re-ranking, the purity of the information ultimately passed to LLM can be dramatically improved, although the computational cost increases slightly. This is extremely effective in preventing hallucination.

③Generation control

Finally, you control how LLM behaves when generating answers.

- Prompt engineering ("If you don't know, just say you don't know"): System prompts (instructions to the AI) include strict instructions such as "Answer based only on the information (context) provided," and "If there is insufficient information, don't try to answer, just say 'I don't have the information.'" Additionally, a technique called "Chain of Thought" is used, which instructs the AI to "first organize the contents of the reference document, then derive an answer" rather than immediately answering, thereby preventing lies based on logical leaps.

- Citation of source: This technology ensures that the file name and page number of the referenced document are always output at the end of the answer. If it displays something like "There is a provision called XX (Source: Work Rules 2025.pdf p.12)," the user can immediately check the original source. This not only prevents AI from lying, but also gives users a sense of security knowing that they can verify the AI's answers.

Operational approach: Design philosophy that does not aim for 100%

Even if every technical measure is taken, it is impossible to reduce hallucination to zero (0.0%) with current AI technology. This is why IT personnel are required to "not aim for 100%" and instead "design systems that tolerate failure and allow for recovery."

UI/UX innovations that require users to always provide evidence (sources) for their answers

The system screen design (UI) is also important. On the chat screen, a link to the PDF that served as the basis for the AI's answer is always displayed next to the answer. A disclaimer is also always displayed in a prominent location on the screen, stating, "AI may give incorrect answers. Please be sure to check the original text before making important decisions." The key to preventing problems is to give users the mental model that "this system is not a teacher who will tell you the answer, but an excellent assistant who will find materials for you."

Human-in-the-loop (construction of a workflow in which the final check is done by a human)

Incorporate flows into business processes that do not leave everything to AI. For example, even if you have AI draft a response email to a customer, make sure that a human is the one who presses the send button. This is called "human-in-the-loop." In particular, when using RAG in high-risk areas such as contracts or legal matters, it is necessary to establish rules in the business flow that AI remains a "draft creator" and that humans remain the final responsible parties.

Finally

There is no magic "silver bullet" for improving RAG accuracy. Rather than searching for the latest model as a silver bullet, the most reliable countermeasure against hallucination is steady "data maintenance" such as deleting junk files on the company's file server, standardizing file naming conventions, and moving old data to an archive area. For IT personnel, this is not only an AI implementation project, but also a great opportunity to inventory and organize internal data assets that have been neglected for many years.

It's too risky to immediately aim for "expansion to all departments across the company" or "complete automation of customer support." We recommend starting small with areas where users can determine the authenticity of information and where mistakes can be corrected, such as the internal help desk or assistance work for veteran employees.

This involves grasping actual hallucination trends and accumulating operational knowledge (MLOps/LLMOps) such as tuning search dictionaries and improving prompts. This approach of gradually expanding the scope of application may seem like a roundabout way, but it is the shortest route to building a reliable in-house AI.