Developer Blog Series Vol.6 - Data Visualization and Analysis

Vol.06 Data Governance in Data Utilization

This is the sixth article on data analysis and visualization.

I attended the keynote at SNOWDAY JAPAN, Snowflake's event held on February 14, 2023.

Anonymization and governance were among the keywords mentioned, and they got me thinking, so I decided to write an article about them.

If I don't write these things down in one go while the impulse lasts, they end up shelved, so please forgive me if some parts are hard to read.

DX Maturity and Governance

While a company's DX (digital transformation) maturity is still low, speed and creating success stories early are key.

The success doesn't have to be huge, but I think it's important that it is one the business division is involved in.

On the other hand, as maturity increases, governance becomes more important.

Rising maturity means the initiative is scaling to the entire company, so I think this is only natural.

Governance tends to trade off against speed, which is a concern for companies looking to raise their maturity.

Recently, we have been considering a service that provides a minimal governance framework, so that companies can create success stories without slowing down (and avoid problems later on).

On the topic of governance, I previously wrote an article on how to think about data quality.

That article covered governance from the perspective of maintaining quality.

This time, I would like to talk about governance from the perspective of data handling (security).

My main reference was the Corporate Privacy Governance Guidebook for the Digital Transformation Era, formulated by the Ministry of Economy, Trade and Industry and the Ministry of Internal Affairs and Communications.

The Five Safes Model: A Data Governance Framework

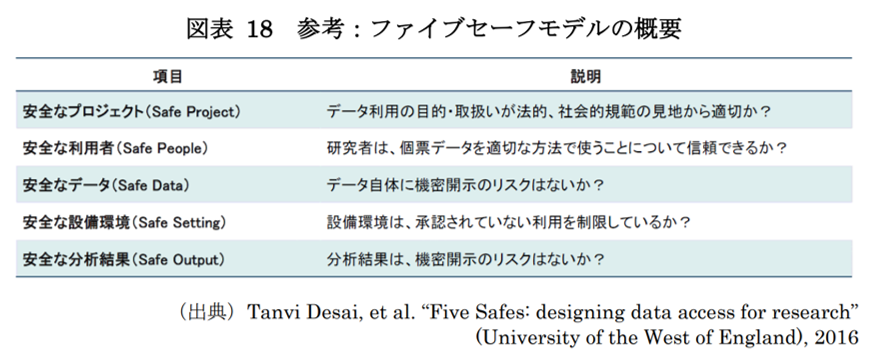

The Five Safes model is a framework that the UK Office for National Statistics (ONS) has used since 2003 to regulate research using confidential information, and it is now widely adopted across the EU as a set of safety rules for the use of individual-level statistical data (microdata).

Its contents are summarized in the table below; rather than being an excerpt, the table covers almost all of it.

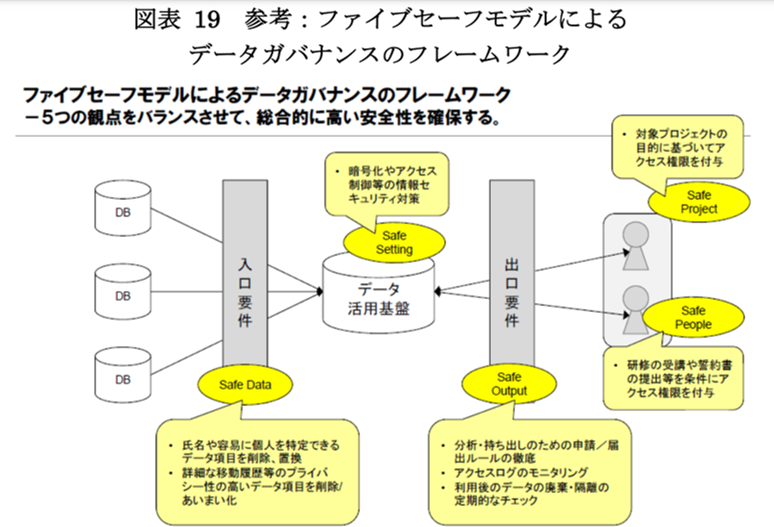
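As a rough illustration, assuming the five dimensions are used as a simple review checklist for a single data-use request, they could be encoded as follows. The question wording is a common paraphrase of the Five Safes, and the `AccessReview` class and request IDs are hypothetical, not part of any official tooling.

```python
from dataclasses import dataclass, field

# The five dimensions of the Five Safes framework, each paired with a
# paraphrase of the question it asks.
FIVE_SAFES = {
    "safe_projects": "Is this use of the data appropriate?",
    "safe_people": "Can the users be trusted to use it appropriately?",
    "safe_settings": "Does the access environment limit unauthorized use?",
    "safe_data": "Is there a disclosure risk in the data itself?",
    "safe_outputs": "Are the results non-disclosive?",
}


@dataclass
class AccessReview:
    """Hypothetical review record for one data-use request."""

    request_id: str
    # Maps a dimension name to True if the reviewer judged it satisfied.
    answers: dict[str, bool] = field(default_factory=dict)

    def unresolved(self) -> list[str]:
        # Dimensions that are missing or were judged not satisfied.
        return [dim for dim in FIVE_SAFES if not self.answers.get(dim, False)]

    def approved(self) -> bool:
        # Strict gate: approve only when all five dimensions are satisfied.
        return not self.unresolved()


review = AccessReview("REQ-001", {"safe_projects": True, "safe_people": True,
                                  "safe_settings": True, "safe_data": True})
print(review.unresolved())  # ['safe_outputs']
print(review.approved())    # False
```

This sketch treats every dimension as a strict pass/fail gate, which is a simplification of how the framework is applied in practice.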

Applying it to a data analysis platform (or a data utilization platform or internal data platform) gives the following.

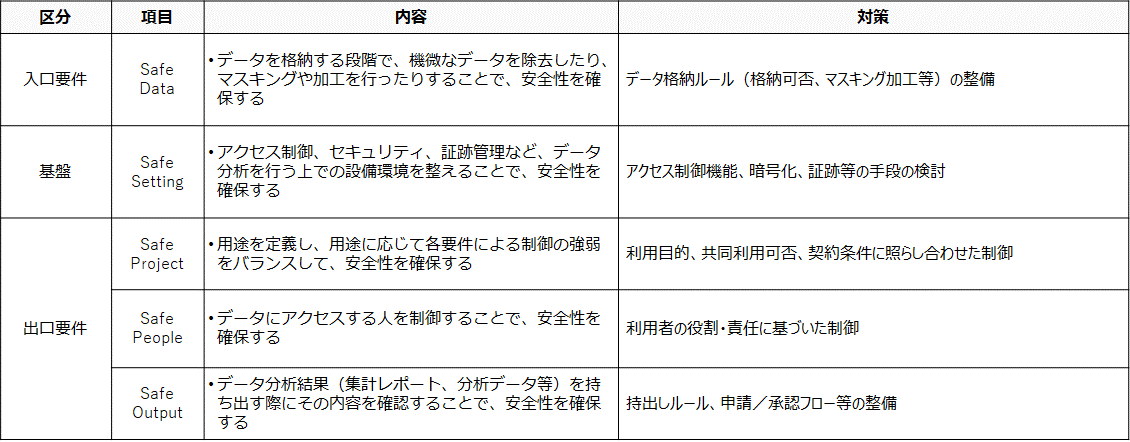

First, it is important to think about the requirements in three separate groups: entry requirements, exit requirements, and requirements guaranteed by the infrastructure itself.

For example, to return to the topic of anonymization in Snowflake, the questions you need to answer change depending on whether anonymization is treated as an entry requirement or an exit requirement (discussed below).

This way of thinking is not limited to data analysis platforms; I think it could also be useful when building a service on HULFT SQUARE, for example.

Entry requirements are defined according to the type of data.

The main question is whether it is acceptable to store the data on an analysis platform in the first place, and if so, how far it must be processed (anonymized, deleted, etc.) beforehand.

The decision is based on legal requirements, company policies, and the system's intended role.

In practice, this means deciding things like how personal information and business transaction data will be handled.
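A minimal sketch of an entry decision, assuming a hypothetical policy table keyed by data category. The category names and the actions assigned to them are illustrative only; real rules would come from the legal requirements, company policies, and system role mentioned above.

```python
from enum import Enum


class EntryAction(Enum):
    REJECT = "reject"          # must not be stored on the platform at all
    DROP_COLUMNS = "drop"      # store, but delete sensitive columns first
    ANONYMIZE = "anonymize"    # store only after anonymization
    ACCEPT = "accept"          # store as-is


# Hypothetical policy table: data category -> required handling on entry.
ENTRY_POLICY = {
    "personal_information": EntryAction.ANONYMIZE,
    "sensitive_personal_information": EntryAction.REJECT,
    "business_transactions": EntryAction.DROP_COLUMNS,
    "public_reference_data": EntryAction.ACCEPT,
}


def entry_decision(category: str) -> EntryAction:
    # Categories nobody has classified yet are rejected by default.
    return ENTRY_POLICY.get(category, EntryAction.REJECT)


print(entry_decision("personal_information").value)  # anonymize
print(entry_decision("unclassified_log").value)      # reject
```

Defaulting unknown categories to `REJECT` means new kinds of data cannot slip onto the platform before someone has decided how they should be handled.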

If anonymization is an entry requirement, an anonymization function built into the infrastructure itself may not be enough (for example, when personal information must not be sent to the cloud at all), so an approach that builds an ecosystem around an external component may also be worth considering.
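If anonymization has to happen outside the platform, the external component could be as simple as a step that runs on-premises before upload. The sketch below assumes hypothetical column names and uses keyed hashing (HMAC-SHA256) from Python's standard library. Strictly speaking, this is pseudonymization rather than anonymization, so whether it satisfies a given legal definition has to be checked separately.

```python
import hashlib
import hmac

# Hypothetical column rules, applied on-premises before any data is sent
# to the cloud platform. Column names are illustrative only.
DROP_COLUMNS = {"phone", "address"}              # removed entirely
PSEUDONYMIZE_COLUMNS = {"customer_id", "email"}  # replaced with keyed hashes


def pseudonymize(value: str, key: bytes) -> str:
    # HMAC-SHA256 yields a stable 64-character hex token per input, so joins
    # still work, but the token cannot be recomputed without the secret key.
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def anonymize_row(row: dict[str, str], key: bytes) -> dict[str, str]:
    out: dict[str, str] = {}
    for column, value in row.items():
        if column in DROP_COLUMNS:
            continue  # never leaves the on-premises side
        if column in PSEUDONYMIZE_COLUMNS:
            out[column] = pseudonymize(value, key)
        else:
            out[column] = value  # passed through unchanged
    return out


key = b"secret-kept-on-premises"  # hypothetical; load from a secret store
row = {"customer_id": "C001", "email": "taro@example.com",
       "phone": "000-0000-0000", "amount": "1200"}
print(sorted(anonymize_row(row, key)))  # ['amount', 'customer_id', 'email']
```

Because the same key always yields the same token, pseudonymized IDs can still be joined across tables on the platform, while the key itself stays on the on-premises side.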

Exit requirements are basically defined according to the project (purpose and use) and the person (user).

For example, you might define them by whether the data is for BI display, or by whether a given role belongs to employees of a group company.

Therefore, if you treat anonymization as an exit requirement, you may need fairly fine-grained configuration.

Anonymization as an exit requirement is often provided by the infrastructure itself, for example through dynamic data masking in a DWH.
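In Snowflake, dynamic data masking is configured with masking policies attached to columns and evaluated at query time. The sketch below does not use Snowflake; it only models the same idea in Python, with hypothetical role names (including one for group-company employees, as in the example above) and a hypothetical `email` column.

```python
# Hypothetical role -> masking level for the "email" column.
MASKING_LEVEL = {
    "DATA_STEWARD": "none",        # sees raw values
    "BI_ANALYST": "partial",       # sees only the domain part
    "GROUP_COMPANY_USER": "full",  # e.g. employees of a group company
}


def mask_email(value: str, role: str) -> str:
    # Roles not listed above get full masking by default.
    level = MASKING_LEVEL.get(role, "full")
    if level == "none":
        return value
    if level == "partial":
        local, _, domain = value.partition("@")
        return "*" * len(local) + "@" + domain
    return "********"


def read_rows(rows: list[dict[str, str]], role: str) -> list[dict[str, str]]:
    # Masking happens at read time on copies; the stored rows are unchanged,
    # so the same column can look different to different roles.
    return [{**row, "email": mask_email(row["email"], role)} for row in rows]


rows = [{"email": "taro@example.com", "amount": "1200"}]
print(read_rows(rows, "BI_ANALYST"))  # [{'email': '****@example.com', 'amount': '1200'}]
```

Unknown roles fall through to full masking, which keeps the default on the safe side.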

In addition to meeting entry and exit requirements, the infrastructure itself must also have functions related to access control, security (restricting access sources), and audit trail monitoring.
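The infrastructure-side functions can be sketched together: a source-network allowlist, a per-table role check, and an audit record for every attempt. The networks, table names, and roles are hypothetical, and the in-memory list stands in for whatever append-only audit store the platform actually provides.

```python
import ipaddress
import json
from datetime import datetime, timezone

# Hypothetical settings: which networks may connect, and which roles may
# read which tables. Values are illustrative only.
ALLOWED_NETWORKS = [ipaddress.ip_network("10.0.0.0/8"),
                    ipaddress.ip_network("192.0.2.0/24")]
TABLE_GRANTS = {"sales": {"BI_ANALYST", "DATA_STEWARD"},
                "customers": {"DATA_STEWARD"}}

AUDIT_LOG: list[str] = []  # stand-in for an append-only audit store


def authorize(user: str, role: str, table: str, source_ip: str) -> bool:
    ip = ipaddress.ip_address(source_ip)
    from_allowed_network = any(ip in net for net in ALLOWED_NETWORKS)
    has_grant = role in TABLE_GRANTS.get(table, set())
    allowed = from_allowed_network and has_grant
    # Record every attempt, allowed or denied, so it can be monitored later.
    AUDIT_LOG.append(json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user, "role": role, "table": table,
        "source_ip": source_ip, "allowed": allowed,
    }))
    return allowed


print(authorize("alice", "BI_ANALYST", "sales", "10.1.2.3"))          # True
print(authorize("bob", "DATA_STEWARD", "customers", "203.0.113.5"))  # False
```

Logging denied attempts as well as allowed ones is what makes the trail useful for monitoring.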

I think it can be roughly summarized as follows:

Organizing data within this framework can also help establish rules for handling data and develop the associated workflows.

Again, I believe this approach can be applied to more than just data analysis platforms.

I wrote this article in the hope that it will help you organize your thinking when building data-related services, so please remember it if the opportunity ever arises.

That's all for this article.

Thank you for reading to the end.