What is a data infrastructure? Integrating internal and external data and driving its utilization

As data grows in importance across every business, companies need a way to manage, in an integrated manner, the data scattered across multiple systems and departments. A data infrastructure provides that foundation and makes the data usable for business purposes.

This article gives an overview of data infrastructure, explains why it needs to be built, and describes its main components.

Furthermore, based on success stories, we will summarize the key points in building a data infrastructure. Please use this as a reference for the knowledge you need to integrate internal and external data and lead the way in organizational data utilization.

What is a data infrastructure?

The data generated by corporate activities is diverse, including sales, customer management, and sensor information from manufacturing sites.

A data infrastructure is a general term for systems and technologies that enable companies and organizations to collect, store, process, and analyze a variety of data in an integrated manner. It functions as a foundation that efficiently aggregates data distributed across multiple systems and departments and makes it available in a centralized manner. By enabling everyone from management to field personnel to access the same data set, it is expected to dramatically improve cross-departmental collaboration and the speed of decision-making.

Why is a data infrastructure necessary?

Data is a valuable asset that forms the basis of decision-making. Being able to analyze large amounts of data quickly and accurately yields insights that are useful in developing new products and services. This section explains the benefits of a data infrastructure from three perspectives: accelerating data utilization, improving business efficiency and reducing costs, and improving data quality and centralizing management.

Data-based policy planning

Having a comprehensive view of the data makes it easier to introduce new analytical methods and tools quickly. Consolidating data in one place also facilitates cross-analysis and the construction of advanced machine learning models.

For example, cross-analyzing customer data and sales data can clarify purchasing trends for each customer segment, improving the accuracy of targeting for new products and marketing initiatives. Having a data infrastructure makes it possible to carry out these tasks efficiently.
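
As a minimal sketch of this kind of cross-analysis, the following Python snippet joins customer records to sales records and totals the amounts per segment and product. The field names (`customer_id`, `segment`, `product`, `amount`) and the sample rows are assumptions made up for illustration; in practice both tables would be read from the integrated data store.

```python
from collections import defaultdict

# Hypothetical sample data standing in for tables in the integrated store.
customers = [
    {"customer_id": 1, "segment": "enterprise"},
    {"customer_id": 2, "segment": "small_business"},
    {"customer_id": 3, "segment": "enterprise"},
]
sales = [
    {"customer_id": 1, "product": "A", "amount": 1200},
    {"customer_id": 2, "product": "B", "amount": 300},
    {"customer_id": 3, "product": "A", "amount": 800},
    {"customer_id": 2, "product": "A", "amount": 150},
]


def sales_by_segment(customers, sales):
    """Join sales to customers on customer_id and total amounts per (segment, product)."""
    segment_of = {c["customer_id"]: c["segment"] for c in customers}
    totals = defaultdict(int)
    for sale in sales:
        # Sales without a matching customer are grouped under "unknown".
        segment = segment_of.get(sale["customer_id"], "unknown")
        totals[(segment, sale["product"])] += sale["amount"]
    return dict(totals)


result = sales_by_segment(customers, sales)
for (segment, product), amount in sorted(result.items()):
    print(segment, product, amount)
```

The join is only this simple because both data sets share a common customer key, which is exactly what an integrated infrastructure is meant to guarantee.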

Business efficiency

Using integrated data eliminates the waste of maintaining the same data in several places and makes it easier for departments to share common data. This can significantly reduce the time spent on tasks such as creating reports.

In addition, standardizing analysis tools and report creation makes tool licensing and operational costs easier to manage, so you can promote data utilization while keeping costs down in the long term.

Improved data quality and centralized management

Having a system in place to monitor data for entry errors and duplication improves data quality, and using an integrated database or data warehouse makes it easy to apply automatic validation.
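
Automatic validation of this kind might look like the sketch below, which flags missing IDs, duplicate IDs, and malformed email addresses before records are accepted. The record layout, the rule set, and the deliberately simple email pattern are illustrative assumptions, not the behavior of any particular database or data warehouse product.

```python
import re

# Deliberately simple pattern for illustration; real email validation is stricter.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_records(records):
    """Split records into valid rows and a list of (row_index, reason) issues."""
    valid, issues, seen_ids = [], [], set()
    for index, record in enumerate(records):
        customer_id = record.get("customer_id")
        email = record.get("email") or ""
        if customer_id is None:
            issues.append((index, "missing customer_id"))
        elif customer_id in seen_ids:
            issues.append((index, "duplicate customer_id"))
        elif not EMAIL_PATTERN.match(email):
            issues.append((index, "malformed email"))
        else:
            seen_ids.add(customer_id)
            valid.append(record)
    return valid, issues


# Hypothetical input containing one duplicate, one entry error, and one missing ID.
records = [
    {"customer_id": 1, "email": "a@example.com"},
    {"customer_id": 1, "email": "a@example.com"},
    {"customer_id": 2, "email": "not-an-email"},
    {"customer_id": None, "email": "c@example.com"},
    {"customer_id": 3, "email": "d@example.com"},
]
valid, issues = validate_records(records)
```

Returning the rejected rows together with a reason, rather than silently dropping them, lets the owning department correct the data at its source.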

Centralized management also offers security benefits, especially for highly confidential data such as customer information. Centralized management of access rights will contribute to compliance and governance across the organization.
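
One way to picture centralized access rights is a single policy table that every data request is checked against. The role names, dataset names, and the `can_read` helper below are hypothetical and serve only as a sketch; real platforms provide their own access-control mechanisms.

```python
# Hypothetical central policy: role -> datasets that role may read.
ACCESS_POLICY = {
    "marketing_analyst": {"sales_summary", "campaign_results"},
    "support_agent": {"customer_contacts"},
    "data_steward": {"sales_summary", "campaign_results", "customer_contacts"},
}


def can_read(role, dataset):
    """Return True only if the central policy explicitly grants the role access."""
    # Unknown roles get an empty permission set, so access is denied by default.
    return dataset in ACCESS_POLICY.get(role, set())


print(can_read("marketing_analyst", "customer_contacts"))
print(can_read("data_steward", "customer_contacts"))
```

Because the policy lives in one place, auditing who can see confidential data means reviewing one table instead of settings scattered across many systems.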

Key components of the data infrastructure

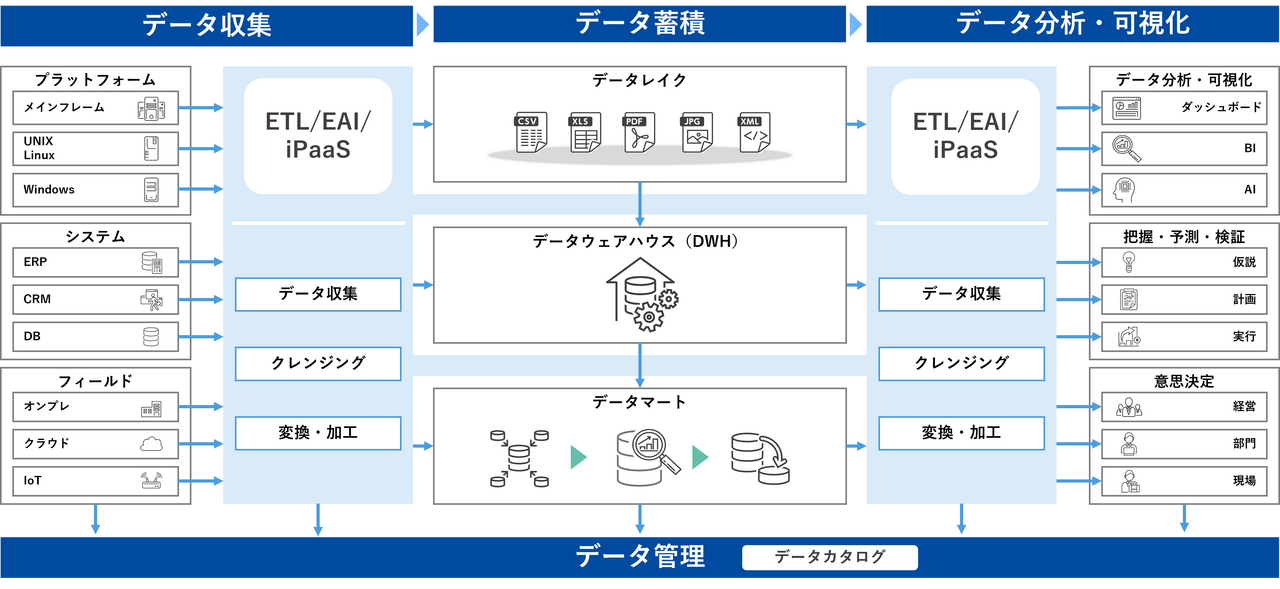

A data infrastructure consists of multiple elements that support a series of processes from collection to storage and analysis. Here, we focus on the main components: data collection, data storage, data processing, data management, analysis, and visualization.

The main role of these elements is to work together to organize data into a usable form. A data infrastructure that supports corporate growth must also be scalable in anticipation of future increases in data volume.

Now let's look at each component in turn.

Data collection

Data collection is the process of acquiring and centralizing data from a wide variety of sources, such as sensor information, website activity history, and internal mission-critical (core) systems. The key here is to build a system that can import data efficiently and stably. Unstable data acquisition can have a negative impact on analysis results and undermine the reliability of the entire infrastructure.

When importing data, it's important to keep in mind the standardization of connection methods and data formats. Corporate data is often distributed across on-premise, cloud, SaaS, and other platforms, and the collection mechanism must accommodate a variety of patterns, such as data acquired via API or as a file. To integrate data smoothly even when acquisition methods and formats differ, and to handle the ever-increasing number of data sources flexibly, data integration tools such as ETL (Extract/Transform/Load) and iPaaS (Integration Platform as a Service) are commonly used. The differences between data integration tools such as ETL and iPaaS are explained in the following article.

▼I want to know the differences between data integration tools

⇒ "What is system integration? How to choose the best integration method for your company"
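
To illustrate why standardizing data formats matters at the collection stage, the sketch below maps a CSV file export and a JSON API response onto one common schema. Both payloads, the field names, and the target schema are assumptions invented for this example; ETL and iPaaS products typically provide this kind of mapping through connectors rather than hand-written code.

```python
import csv
import io
import json

# Hypothetical payloads: a CSV export from an on-premise system
# and a JSON response from a SaaS API.
CSV_EXPORT = "order_id,amount,ordered_at\n1001,2500,2024-04-01\n1002,1200,2024-04-02\n"
API_RESPONSE = '{"orders": [{"id": 2001, "total": "980", "date": "2024-04-03"}]}'


def from_csv(text):
    """Map rows of the CSV export onto the common schema."""
    return [
        {
            "order_id": int(row["order_id"]),
            "amount": int(row["amount"]),
            "ordered_at": row["ordered_at"],
            "source": "csv_export",
        }
        for row in csv.DictReader(io.StringIO(text))
    ]


def from_api(text):
    """Map the API's differently named fields onto the same schema."""
    return [
        {
            "order_id": int(order["id"]),
            "amount": int(order["total"]),
            "ordered_at": order["date"],
            "source": "saas_api",
        }
        for order in json.loads(text)["orders"]
    ]


# After normalization, downstream steps no longer need to know the origin format.
orders = from_csv(CSV_EXPORT) + from_api(API_RESPONSE)
```

Keeping a `source` field on every record is a small design choice that makes it possible to trace a problem back to the system that produced it.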

Data storage

Data lakes, data warehouses, and data marts are commonly used to store collected data. The differences between these are explained below.

Data Lake

A data lake stores raw data (structured, semi-structured, and unstructured) largely as-is, so it can hold any type of data regardless of format.

Major data lake services: Amazon Simple Storage Service (Amazon S3), Azure Data Lake Storage, Google Cloud Storage, Snowflake

▼I want to know more about data lakes

⇒ Data Lake | Glossary

Data Warehouse (DWH)

A data warehouse structures and stores the raw data held in a data lake so that it can be used for analysis and reporting. In most cases, the data must be processed when it is loaded.

Major data warehouse services: Amazon Redshift, Microsoft Azure Synapse Analytics

▼I want to know more about data warehouses (DWH)

⇒ DWH|Glossary

Data Mart

A data mart is a subset of data warehouse data that is relevant to a specific business department (e.g., marketing, human resources, or finance). It focuses on that department's specific business needs and supports its day-to-day operations and analytical work.

The data warehouse services mentioned above generally have the ability to extract and organize data, allowing you to create data marts.
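
The relationship between a data warehouse and a data mart can be sketched with Python's built-in `sqlite3` module, using an in-memory database as a stand-in for a warehouse. The `sales` table, its columns, and the `marketing_mart` view are hypothetical; actual warehouse services offer their own ways of defining such subsets.

```python
import sqlite3

# In-memory SQLite database used as a stand-in for a data warehouse.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE sales (order_id INTEGER, department TEXT, campaign TEXT, amount INTEGER);
    INSERT INTO sales VALUES
        (1, 'marketing', 'spring_sale', 500),
        (2, 'marketing', 'spring_sale', 300),
        (3, 'finance',   NULL,          900),
        (4, 'marketing', 'newsletter',  200);

    -- Data mart: only the marketing rows, pre-aggregated per campaign.
    CREATE VIEW marketing_mart AS
        SELECT campaign, COUNT(*) AS orders, SUM(amount) AS revenue
        FROM sales
        WHERE department = 'marketing'
        GROUP BY campaign;
    """
)

mart_rows = conn.execute(
    "SELECT campaign, orders, revenue FROM marketing_mart ORDER BY campaign"
).fetchall()
for row in mart_rows:
    print(row)
```

The marketing team queries only `marketing_mart`, so it sees data shaped for its own questions without needing to understand the full warehouse schema.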

On-premise or cloud?

When building and operating a data infrastructure, you must decide whether your company's on-premise environment or the cloud is more suitable. An on-premise environment needs to be sized accurately, but because it is hard to predict how much data will be handled in the future, accurate sizing is difficult at an early stage.

As a result, cloud-based managed services, which let you add or remove resources flexibly as your business grows and keep initial investment down, are becoming mainstream (the major services listed above also fall into this category). Another benefit of cloud-based managed services is that updates are carried out automatically, reducing the operational burden and allowing you to always use the latest technology and environment.

▼I want to know more about on-premise

⇒ On-Premises | Glossary

Data Processing

Data processing and transformation converts the collected and stored raw data into a state that can actually be analyzed. The necessary data is selected in the extraction stage, cleansed and formatted in the transformation stage, and finally loaded into a data warehouse or similar store. Establishing this process firmly improves data quality and consistency and makes analysis work more efficient. It is particularly important to detect errors and remove duplicates during transformation so that subsequent analysis does not produce incorrect results.
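
The extraction, transformation, and load stages described above can be sketched as three small functions. Everything here is an assumption for illustration: the raw rows, the `payments` table, the cleansing rules, and the use of an in-memory SQLite database in place of a data warehouse.

```python
import sqlite3

# Hypothetical raw rows as they might arrive from a source system.
RAW_ROWS = [
    {"id": "1", "name": " Alice ", "amount": "100", "memo": "x"},
    {"id": "2", "name": "Bob", "amount": "abc", "memo": ""},    # invalid amount
    {"id": "1", "name": "Alice", "amount": "100", "memo": ""},  # duplicate id
    {"id": "3", "name": "carol", "amount": "250", "memo": ""},
]


def extract(rows):
    """Extraction: keep only the fields needed downstream."""
    return [{"id": r["id"], "name": r["name"], "amount": r["amount"]} for r in rows]


def transform(rows):
    """Transformation: cleanse values, reject unparseable rows, drop duplicate ids."""
    clean, rejected, seen = [], [], set()
    for row in rows:
        try:
            record = (int(row["id"]), row["name"].strip().title(), int(row["amount"]))
        except ValueError:
            rejected.append(row)  # error detection: keep the row for later review
            continue
        if record[0] in seen:
            continue  # duplicate removal: first occurrence wins
        seen.add(record[0])
        clean.append(record)
    return clean, rejected


def load(conn, records):
    """Load: write the cleansed records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS payments (id INTEGER PRIMARY KEY, name TEXT, amount INTEGER)"
    )
    conn.executemany("INSERT INTO payments VALUES (?, ?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")
clean, rejected = transform(extract(RAW_ROWS))
load(conn, clean)
loaded = conn.execute("SELECT id, name, amount FROM payments ORDER BY id").fetchall()
```

Doing error detection and deduplication before the load step means the target table only ever contains rows that analysts can trust.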

Data Management

Companies that hold huge amounts of data often face problems with data discovery, analysis, and the reliability of the data itself. Data catalogs are used as a system for organizing, classifying, and managing the data assets held by companies and organizations. Metadata is the data that defines the attributes and characteristics of data, and a data catalog can be thought of as a catalog that manages that metadata.

Metadata describes the structure and attributes of each piece of data, the date and time it was created, who created it, etc. Therefore, by using a data catalog, you can easily find the location of the data you need and use, share, and protect it.
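
As a rough sketch of the idea, a data catalog can be modeled as a searchable collection of metadata entries. The `CatalogEntry` and `DataCatalog` classes, their fields, and the sample datasets are hypothetical and are not the data model of any specific catalog product.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CatalogEntry:
    """Metadata describing one dataset; the field names are illustrative."""
    name: str
    location: str
    owner: str
    created: date
    columns: list = field(default_factory=list)
    tags: set = field(default_factory=set)


class DataCatalog:
    def __init__(self):
        self._entries = {}

    def register(self, entry):
        self._entries[entry.name] = entry

    def search(self, keyword):
        """Return names of entries whose name, tags, or columns contain the keyword."""
        keyword = keyword.lower()
        return sorted(
            e.name
            for e in self._entries.values()
            if keyword in e.name.lower()
            or any(keyword in tag.lower() for tag in e.tags)
            or any(keyword in col.lower() for col in e.columns)
        )

    def locate(self, name):
        """Return where the named dataset is stored."""
        return self._entries[name].location


catalog = DataCatalog()
catalog.register(CatalogEntry(
    "customer_master", "warehouse.crm.customers", "sales_ops",
    date(2024, 1, 15), ["customer_id", "email"], {"pii", "customer"},
))
catalog.register(CatalogEntry(
    "web_access_log", "lake/raw/web/", "web_team",
    date(2024, 2, 1), ["session_id", "url", "customer_id"], {"clickstream"},
))

print(catalog.search("customer"))
print(catalog.locate("web_access_log"))
```

Searching the metadata rather than the data itself is what lets users discover a dataset, and see who owns it, before they have been granted access to its contents.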

▼I want to know more about data catalog products

⇒ Collect, organize, and catalog scattered metadata: "HULFT DataCatalog"

Data Analysis and Visualization

Ultimately, it is the insights and predictions derived from data that create business value. Analysis and visualization are how the clean data produced by a data infrastructure is put to use.

BI (Business Intelligence) tools are software that extracts necessary metrics from diverse data sets and compiles them into graphs and reports. An increasing number of products can be used without programming skills, providing a foundation for a wide range of employees to utilize data.

Successful data analysis requires an understanding of the business domain. Visualizing results in graphs and reports is not the goal in itself; the whole team also needs a shared way to interpret the results and decide what actions to take.

The secret to building a successful data infrastructure

A data infrastructure is not just a matter of technology; it requires a comprehensive approach that includes internal structures and operational processes. It is no exaggeration to say that success depends in particular on how well operations are established after implementation.

By clarifying the data and analysis content that each department wants to use, requirements definition will be smoother and it will be easier to use the system after implementation. At the same time, it is essential to increase the reliability of the data by strengthening security measures and governance.

Key points for success learned from case studies

In the success stories of other companies, it is common for management and each site to align their perspectives from the early stages of the project, set clear KPIs, and regularly share results. Another effective approach is to clarify the requirements definition, establish an operational system, and then start small, expanding gradually.

Successful companies first focus their data infrastructure on one department or issue, gaining understanding and support within the company by achieving early results. By gradually expanding the scope, they are steadily promoting data utilization while reducing the risk of large-scale investment.

On the other hand, there are cases where the purpose of implementation is unclear and only a policy of "let's build a data infrastructure" takes precedence, resulting in a system that is out of sync with on-site needs and ends up becoming a mere formality. To prevent such failures, it is important to clarify the purpose of implementation and firmly establish an operational system and usage plan.

▼I want to know about successful examples of data infrastructure construction

⇒ Nissin Foods Holdings Case Study

"Establishing data integration and analysis platform that utilizes generative AI to contribute to data-driven management"

Summary

A data infrastructure is a fundamental system for integrating massive amounts of data both inside and outside a company and generating business value. It is becoming indispensable in the age of big data, and whether a company can utilize this data will determine its competitiveness.

When building one, it is necessary to create a consistent process that runs from goal setting and requirements definition through tool selection and implementation to operation and improvement. The shortcut to success is to work closely with internal stakeholders, including management, along the way, and to expand and improve the system gradually.

We hope that you will use the success stories of other companies as a reference to build the optimal data infrastructure for your company and aim to further expand your business.